AI Transparency Portal

Building trust through accountability, fairness, and transparency.

A consortium of companies and organizations defending the future of responsible engineering practices.

ACTIONS TAKEN

FUTURE SAFEGUARDS

STAKEHOLDER FEEDBACK

Bias Audit Findings

2025

Audit conducted by an independent third party, Q1 2025.

The independent review of AI hiring platforms revealed measurable disparities in outcomes across demographic groups. The audit found that male candidates were recommended for advancement 14% more frequently than female candidates with comparable qualifications. In addition, candidates from underrepresented ethnic backgrounds faced a 9% lower selection rate, highlighting gaps in dataset diversity and algorithmic weighting.

While these findings underscore significant shortcomings, the review also noted areas of progress. Aperture’s models demonstrated strong consistency in evaluating educational background and work experience across all applicant groups, suggesting that targeted improvements in data sourcing, bias detection, and validation processes could substantially improve fairness. These results form the foundation of the company’s ongoing corrective measures and long-term safeguards.
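For readers who want to see how disparity figures of this kind can be derived, the following is a minimal sketch and not the auditor's actual method. It assumes each audit record is a pair of a group label and a recommended flag, computes a selection rate per group, and expresses the gap as a relative difference against a reference group. The function names, group labels, and sample counts are hypothetical, chosen only so that the result matches a 14% relative gap.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def selection_rates(records: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Return the share of recommended candidates for each group."""
    total: Counter = Counter()
    selected: Counter = Counter()
    for group, recommended in records:
        total[group] += 1
        if recommended:
            selected[group] += 1
    return {group: selected[group] / total[group] for group in total}


def relative_difference(rate: float, reference_rate: float) -> float:
    """Return how much higher (or lower) `rate` is relative to `reference_rate`."""
    return (rate - reference_rate) / reference_rate


# Hypothetical sample: 100 comparable candidates per group (illustrative only).
records = (
    [("group_a", True)] * 57 + [("group_a", False)] * 43
    + [("group_b", True)] * 50 + [("group_b", False)] * 50
)

rates = selection_rates(records)
gap = relative_difference(rates["group_a"], rates["group_b"])
print(f"{gap:.0%}")  # prints 14%
```

A relative difference is only one possible reading of the reported numbers. The audit summary does not state whether its percentages are relative differences or percentage-point gaps, so the choice here is an assumption.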

Actions Taken

Immediate Corrective Actions

Restructured tech teams to include ethics experts.

Expanded datasets to improve representation.

Deployed real-time bias monitoring tools internally.

Two-thirds of respondents are concerned about the impact of AI on the human race.

How concerned are you that AI will have a negative impact on the human race?

A1 Very concerned 22%

A2 Somewhat concerned 46%

A3 Not really concerned 25%

A4 I don’t have an opinion 7%

Sixty-eight percent of respondents are somewhat or very concerned about the negative impact of AI on the human race, compared to one in four who are unconcerned.

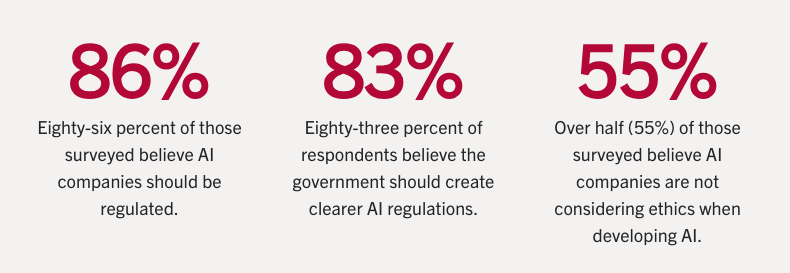

Stats, content, and infographics from Santa Clara University’s Markkula Center for Applied Ethics

Future Safeguards

Commitments Going Forward

Quarterly public bias audits (next release: Q4 2025)

Creation of an Independent Ethics Advisory Council

Open API access for external researchers

Annual public report with metrics and progress

To ensure that fairness remains a measurable standard, Aperture Dynamics will publish quarterly public bias audits beginning in Q4 2025. An Independent Ethics Advisory Council, composed of external experts and community representatives, will review results and provide recommendations directly to executive leadership. In addition, an open API access program will allow approved researchers to independently test the company’s AI systems, creating an extra layer of accountability.

Beyond technical fixes, Aperture is embedding ethics into its organizational culture. All product teams will undergo mandatory ethics and bias training, supported by new governance protocols that require ethical sign-offs at each stage of product development. Together, these safeguards are designed to make transparency not just a corrective step, but a permanent part of how Aperture Dynamics innovates.

Stakeholder Feedback

“Transparency is the right move — it raises the bar for the industry.” – Independent AI Ethics Alliance

Early responses to this AI Transparency Portal initiative have been mixed but constructive. Independent watchdog groups and advocacy organizations have praised the company’s willingness to share bias audit results publicly, calling it a “meaningful step toward accountability” and a potential model for the broader tech industry. Several analysts have noted that by formalizing safeguards, Aperture has an opportunity to rebuild trust and distinguish itself as a leader in ethical AI.

At the same time, some stakeholders remain cautious. Market observers have pointed out that transparency alone will not address structural issues unless it is paired with measurable improvements in outcomes. Others have raised concerns about whether competitors might use disclosed vulnerabilities to their advantage. These perspectives underscore the importance of consistent follow-through — stakeholders want to see not just promises, but sustained results over time.

About the Transparency Consortium

The Transparency Consortium was formed in response to the growing need for independent oversight and accountability in the development of artificial intelligence systems. Composed of external ethics experts, academic researchers, industry partners, and community representatives, the consortium works alongside Aperture Dynamics to evaluate bias audits, investigate potential risks, and provide impartial guidance. By combining technical expertise with diverse perspectives, the group works to ensure that ethical considerations are embedded into every stage of product development.

Through regular independent investigations, open reporting, and public engagement, the consortium offers more than compliance — it establishes a model for how responsible engineering can shape the future of AI. By publishing findings and recommendations in partnership with Aperture Dynamics, the consortium promises to make transparency and fairness a permanent standard across the industry. Its mission is clear: to build technologies that serve society with trust, accountability, and responsibility at their core.

Transparency Consortium Founding Members

Center for Algorithmic Integrity (CAI) – an independent nonprofit dedicated to auditing and certifying AI systems for fairness and accountability.

Ethics in Innovation Forum (EIF) – a global network of scholars and technologists advancing responsible engineering practices.

Institute for Responsible Systems Design (IRSD) – a policy and research institute focused on building governance frameworks for emerging technologies.

Civic Data Trust Alliance (CDTA) – a coalition advocating for transparency and equitable data practices across industries.

Global Council on AI Standards (GCAS) – an international body of industry leaders and regulators developing shared principles for trustworthy AI.

Society for Technology and Human Values (STHV) – a community-driven watchdog group ensuring that human rights remain central in AI adoption.

Disclaimer: This Transparency Portal is a design artifact created for The Bias Breakpoint, a SpacetimeGame™ scenario. It is not an actual product or initiative, but a simulated example used for experiential learning and leadership training. Except for the content from Santa Clara University’s Markkula Center for Applied Ethics, all names, organizations, and content are fictional, designed to demonstrate how companies might respond to complex challenges in artificial intelligence ethics.